SREcon23 EMEA: Should I Use OTel (collectors) or Is Prometheus Good Enough?

Abstract

Thursday, 12 October, 2023 - 09:00–09:40 Krisztian Fekete, solo.io

Everyone is talking about OpenTelemetry. It’s a popular topic, even though most people are not aware of the differences between OpenTelemetry tools, APIs, and SDKs, so participating in these conversations can be confusing at times.

Others think that OpenTelemetry (the collector, in this case) is a silver bullet, and that everyone needs to remove all of their Prometheus instances and telemetry agents, and deploy OTel collectors everywhere. Are they aware of the functionalities they miss or the failure modes they will introduce? Not necessarily.

As a former SRE, I monitored tens of millions of users with the traditional Prometheus stack. In my current position I am working heavily with OTel to design telemetry pipelines at scale with customers. The goal of this talk is to make it easier for SREs to make informed decisions about the pros and cons of the tooling they are considering using for the job at hand.

The talk

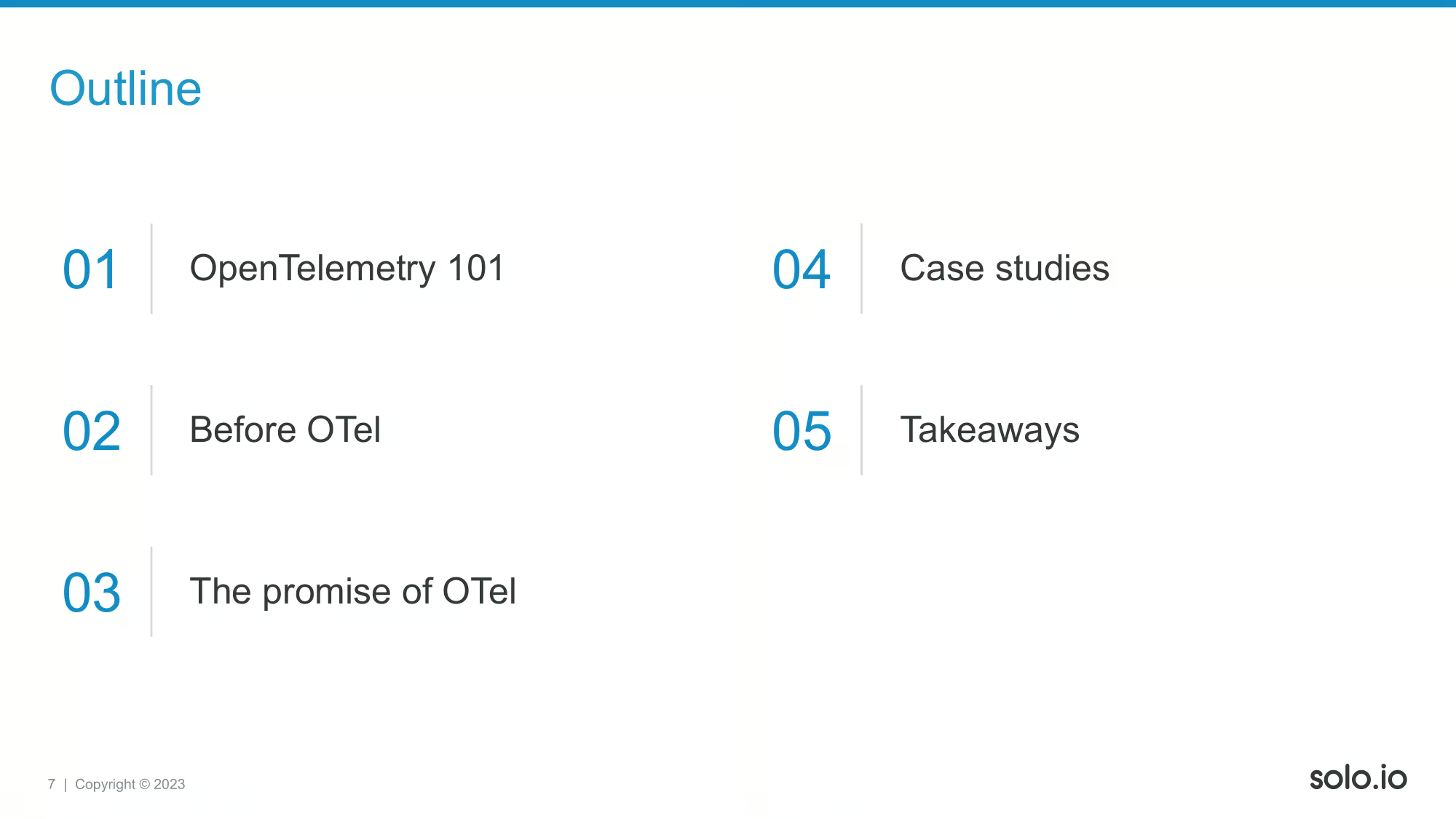

Hi everyone, my name is Krisztian Fekete, thank you for having me, and for being here this early on the very last day! Today we are going to talk about why it is hard to articulate OTel concepts, and why it might be even harder to understand the trade-offs when someone asks: should I use OTel?

The answer is always a “maybe”, an “it depends”: as with everything else in engineering, all choices come with trade-offs. The goal of this talk is that SREs can walk away better informed about these trade-offs, or at least have some questions to discuss with their teams while they are building out their o11y stacks.

My name is Krisztian Fekete, I am a former but to some extent lifelong SRE. Feel free to reach out with any questions you may have on the topics I will be discussing. I am more than happy to share my experiences, opinions, and occasionally hard takes as well.

First, here’s some background on me. I was doing mostly the same stuff in all of my positions, but you can notice how the name of this type of work has changed over the years. Apart from working on infrastructure, I was and still am mostly focusing on o11y. I started out with smallish video streaming clusters, then moved to a mix of VMs and cloud native infrastructure serving millions of users at LastPass. This was the place where I first encountered Istio, and later I moved to solo.io - the Istio company. Here I am working as a Field Engineer, which is mostly equivalent to a Solutions Architect role. We help our customers to design their app networking stack, but due to the size of the company I am fortunate enough to be able to wear multiple hats: work with customers, do some o11y plumbing, build eBPF programs, or even contribute to our products’ design or implementation.

Here you can find some trivia on me. As I mentioned, I want to make SREs’ lives easier, so please visit this GitHub link, and let Grafana Labs know you are interested in this feature!

Who is familiar with Solo.io? Who is using Istio? I introduced it previously as the “Istio company”, but what we are basically doing is making application networking really simple for our users. Our users are usually platform/DevOps/SRE engineers. We provide a unified solution to all the problems that can arise in this space, starting from API Gateways through Service Meshes right up to GraphQL and Developer Portals, plus all the o11y + config mgmt tasks that are required to run this in production.

Let’s start out with a high level introduction to OpenTelemetry itself and see how the term/technology can lead to confusion sometimes!

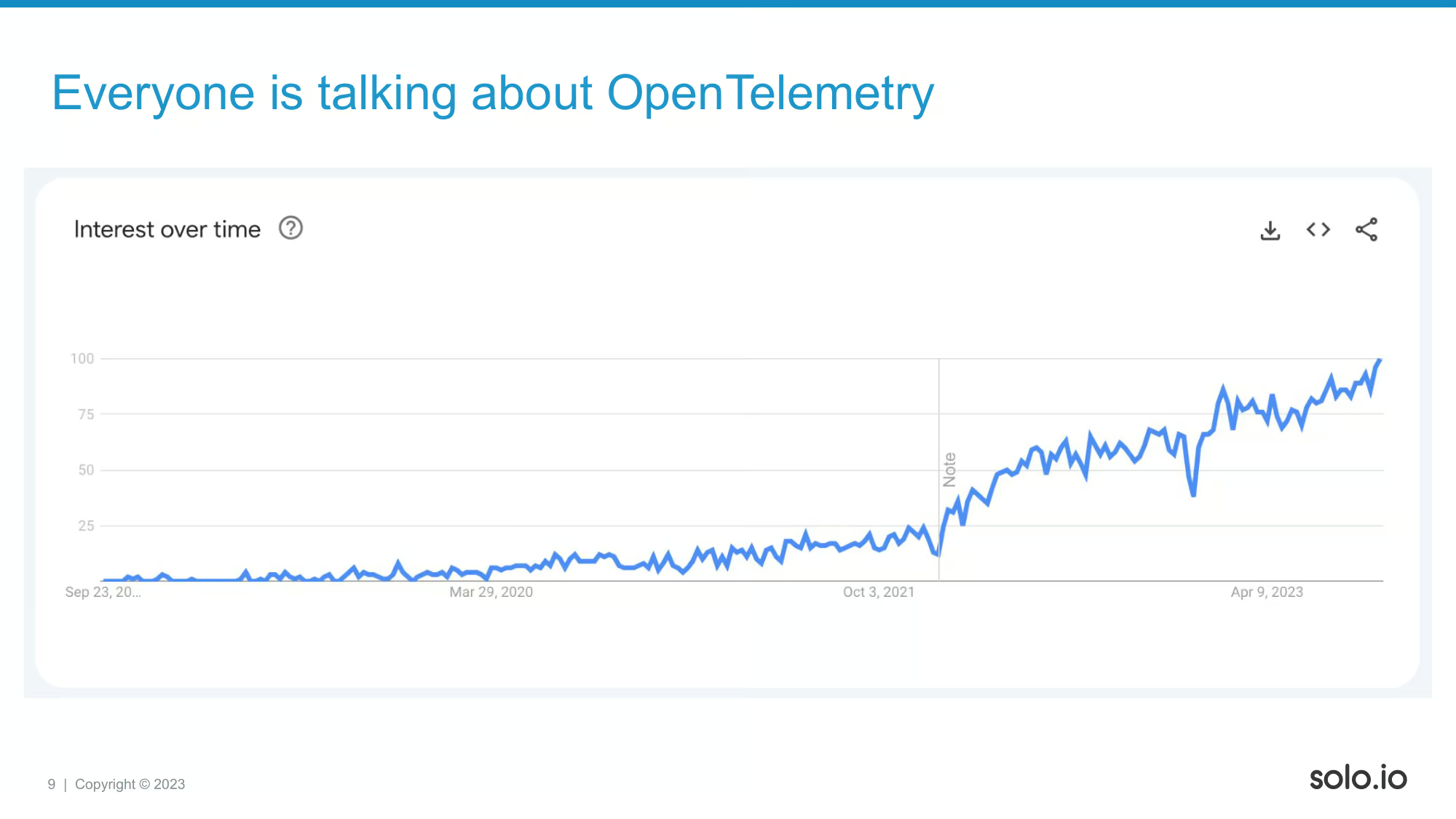

Everyone is talking about OTel. Here you can see the corresponding Google Trends screenshot to give you some idea of its popularity. If anyone is aware of a better tool to gauge the popularity of certain technologies, I’d like to know! I don’t really like this one!

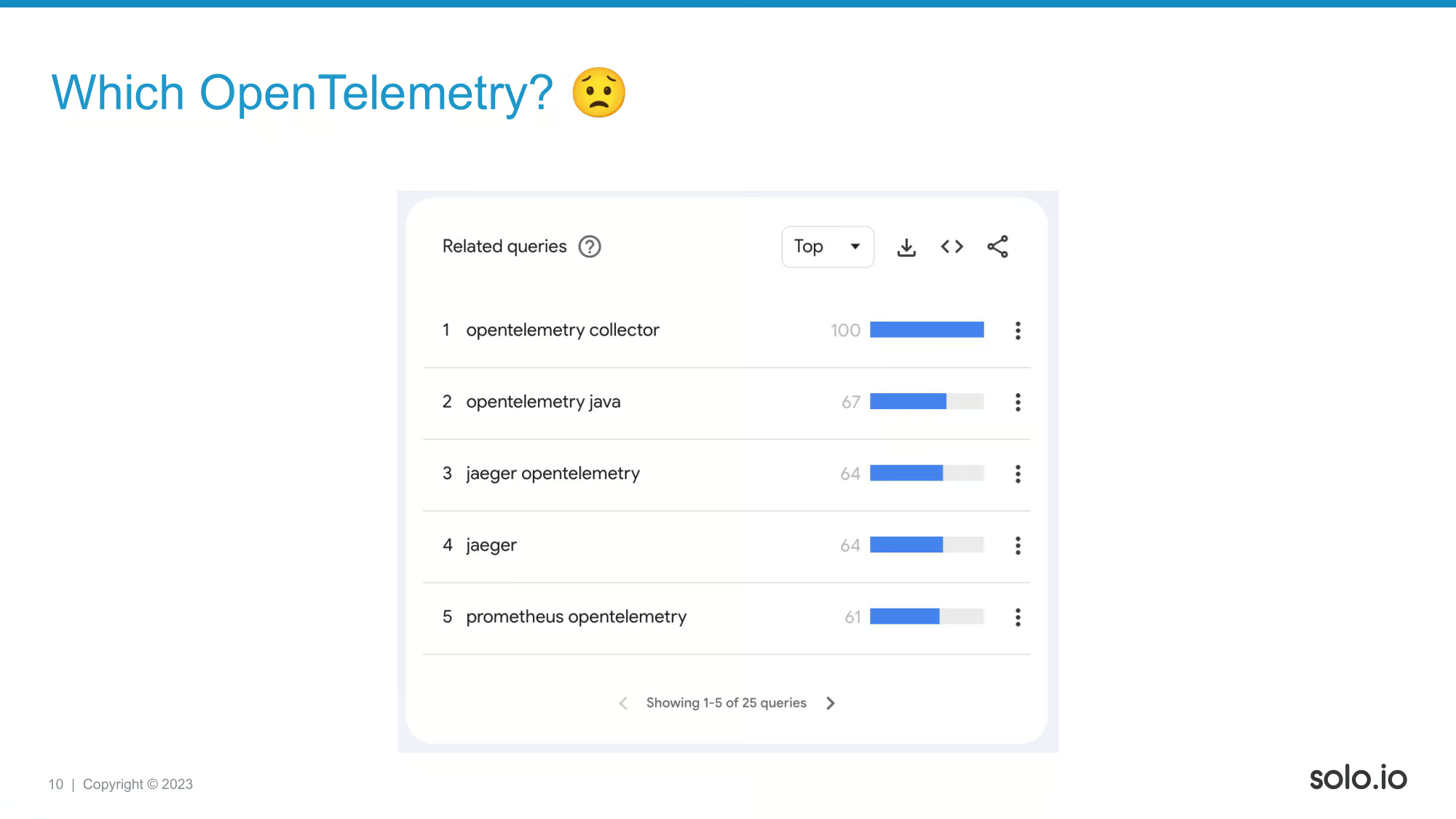

If we check the related queries that make up this graph, we will see this. What we basically see here is that there are different ways to find OTel content. We have a collector, we have something Java specific, and Jaeger/Prometheus are also mentioned. What are all these? Let’s check the project’s own definition to see what OTel really is!

You can try to read this definition and make something out of it, but without any prior experience, this might not help too much. Also, based on my tests yesterday, if you are sitting more than halfway back in the room, you probably can’t read this text.

People usually get confused by a definition like this. Let’s scroll down on the website!

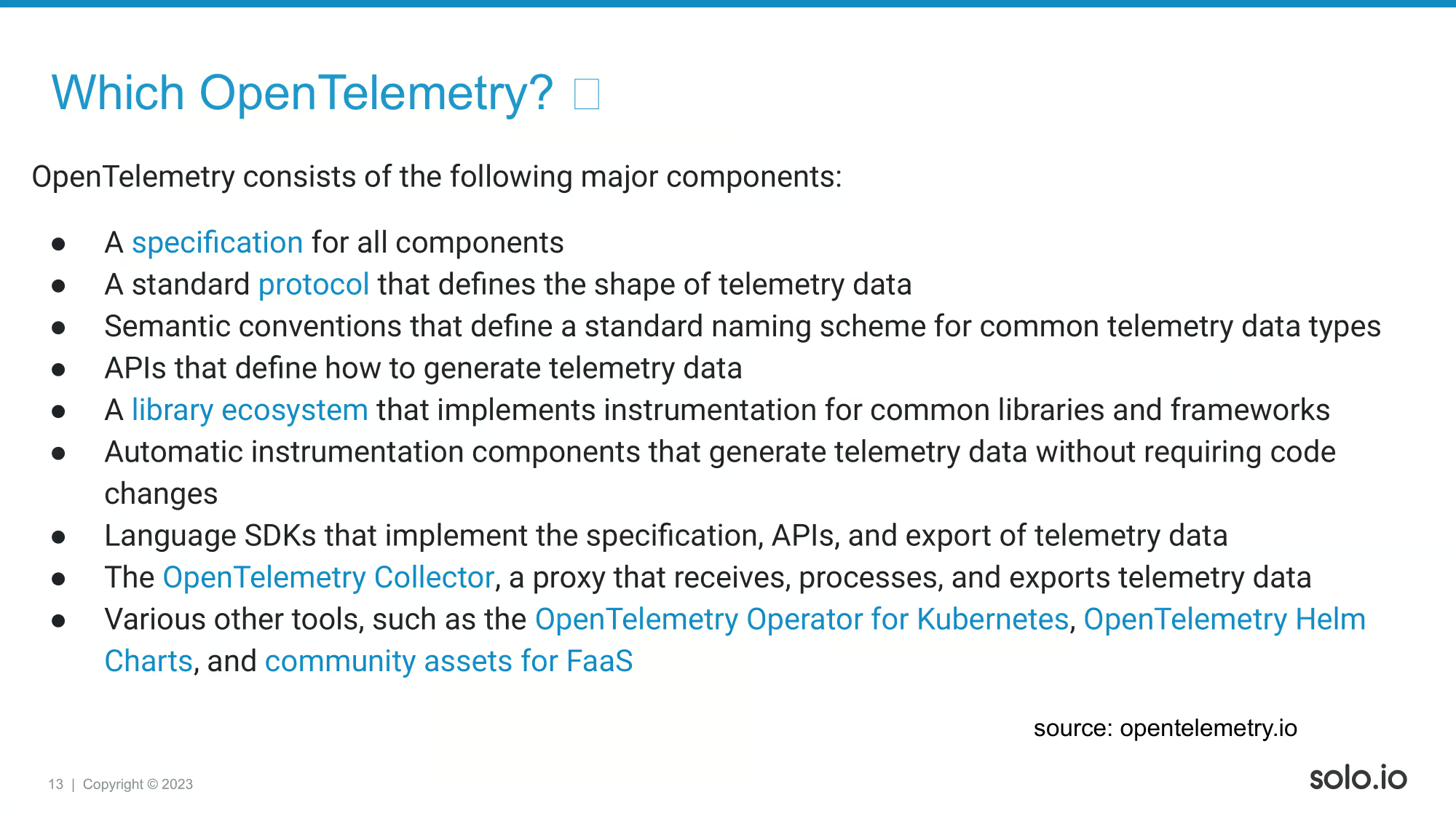

This is the section coming up right next to the definition of OTel. Does this help to clear up the confusion?

Not much clearer, eh? There may be engineers out there who give up after reading things like this. I personally wanted to try OTel out a few months after it was created, and what I encountered was one of the most confusing docs I have ever seen.

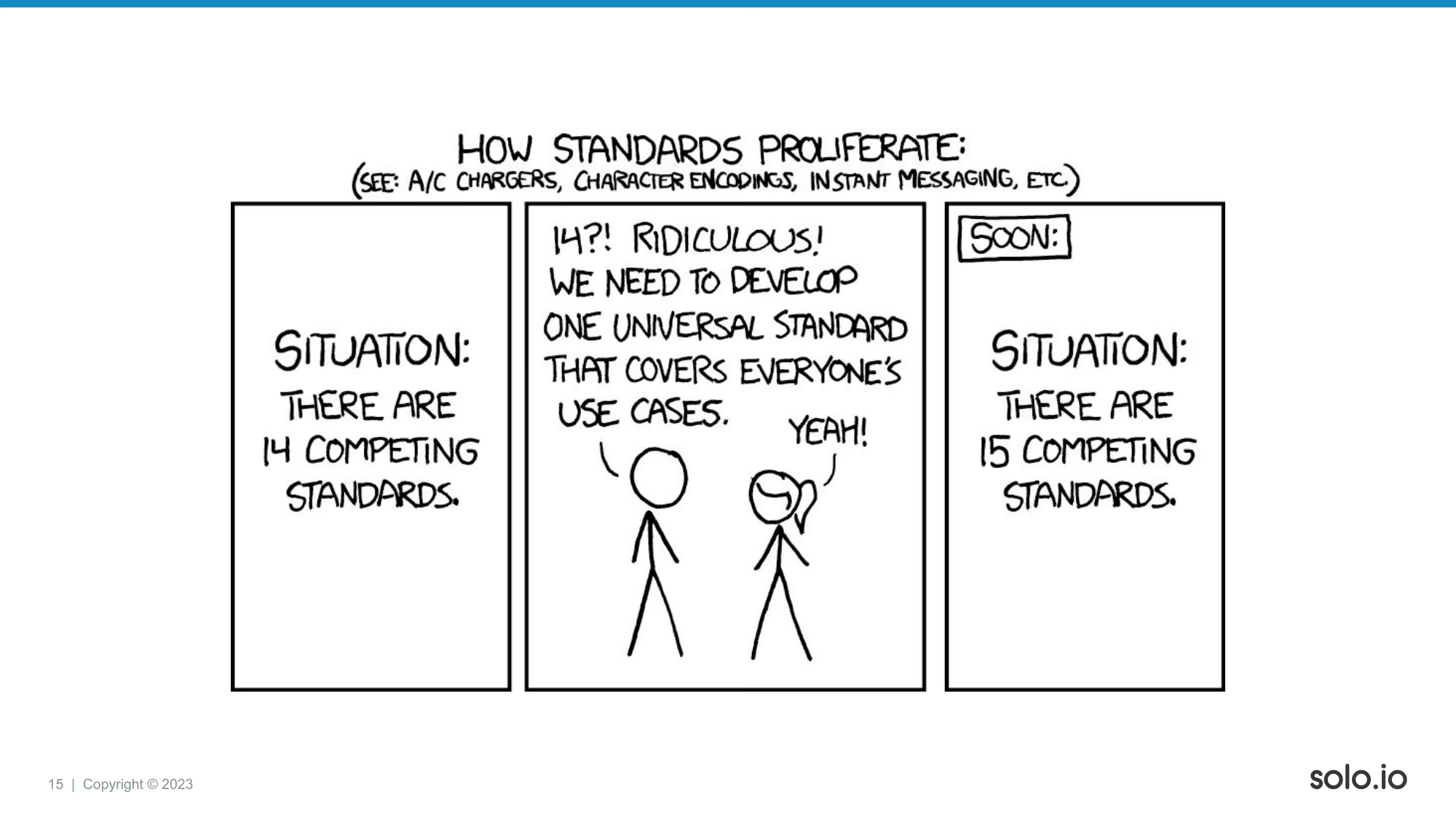

In defense of all OTel developers: it’s hard to come up with a proper definition if you really want to be a Swiss Army knife and solve all kinds of different problems. This is the XKCD I was hesitant to include, as I don’t think that OTel is inherently bad. I generally think that the Collector is more useful/production ready than the libraries, and in my talk I will be mostly focusing on that component.

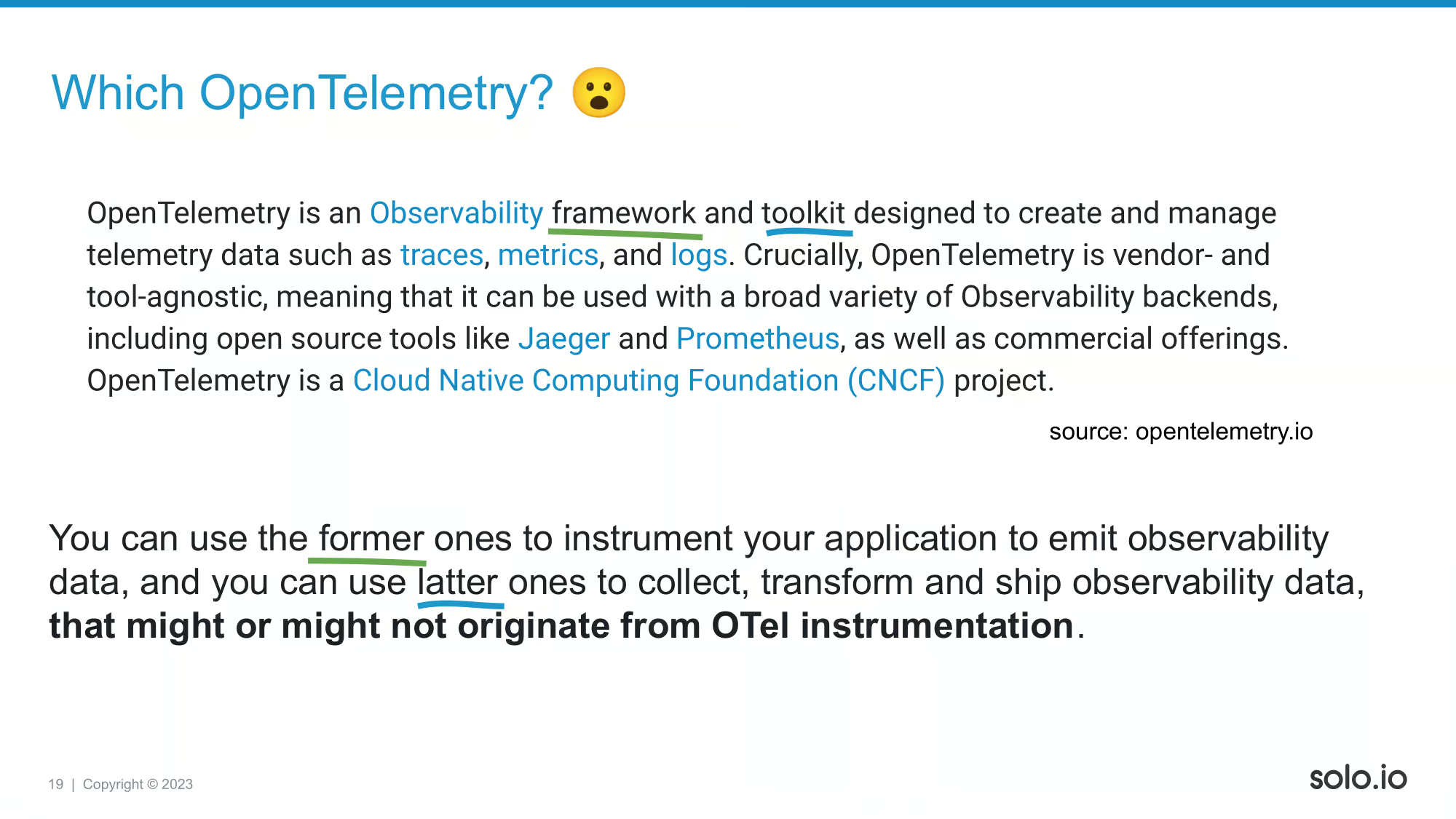

This is my own commentary on the definition.

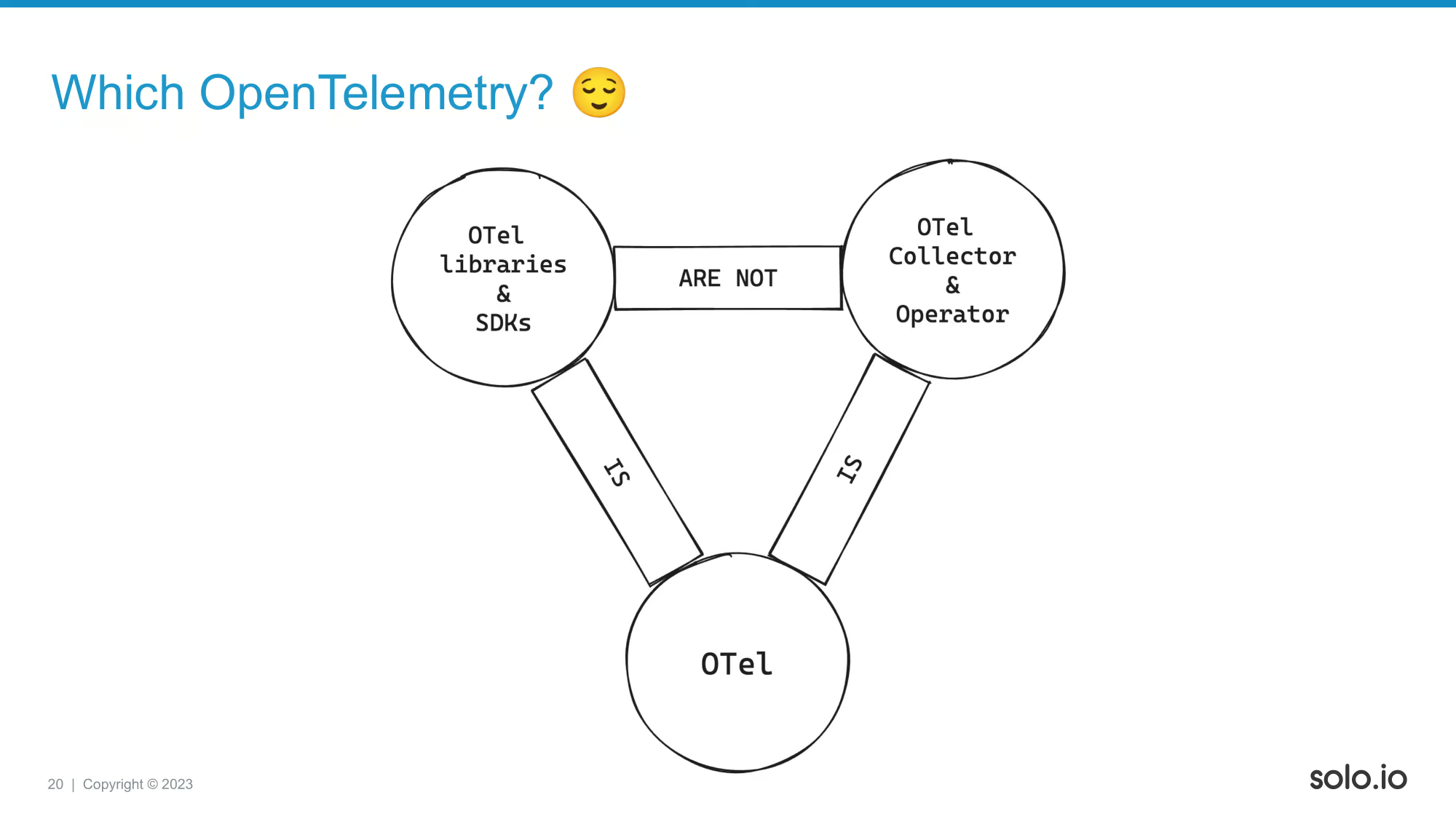

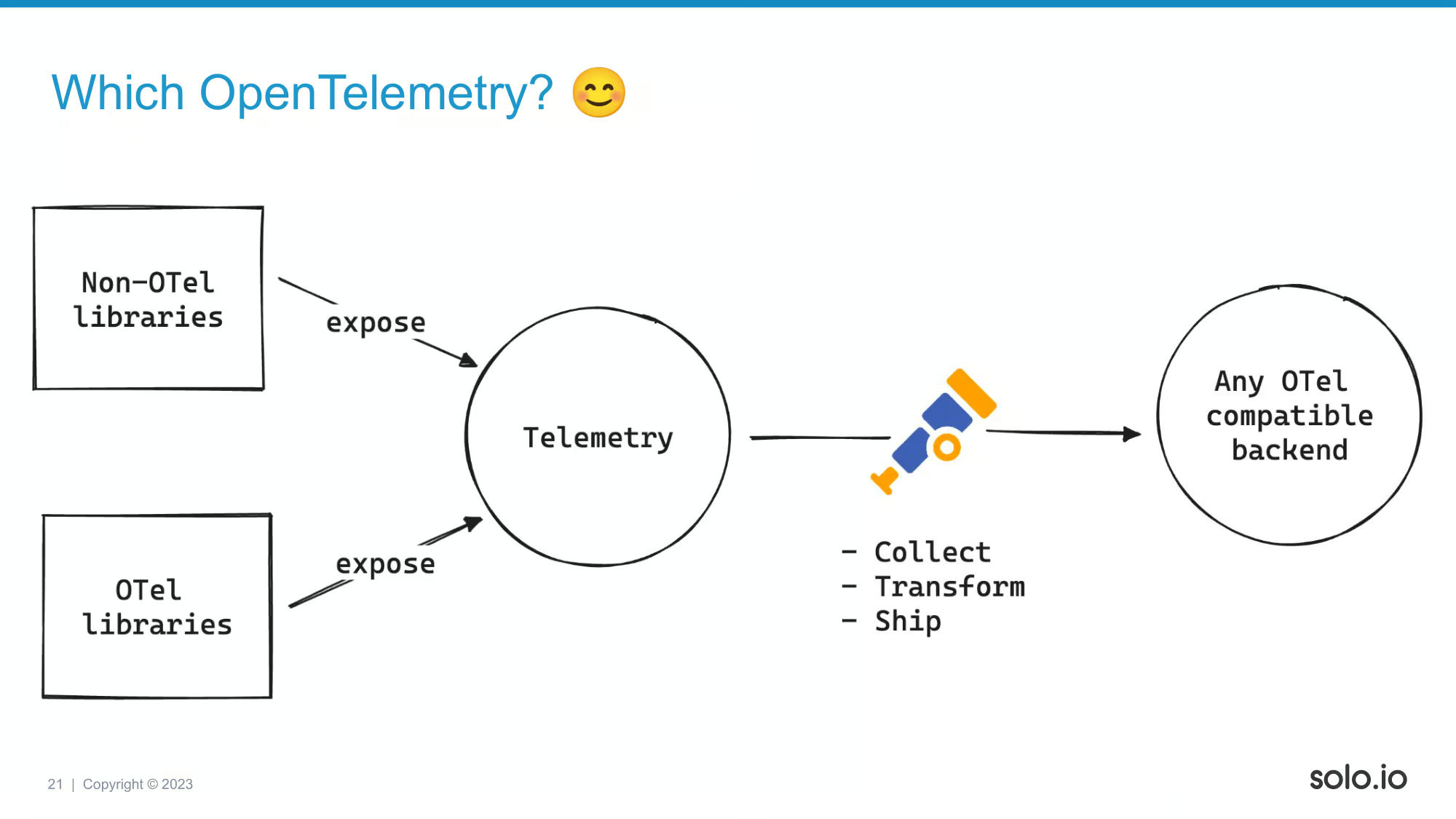

So what is important is that there are OTel things (libraries, SDKs) to instrument applications, and there is some OTel tooling (Collector, Operator). These are used for different things, although people can refer to both categories of tools as OTel.

If someone says: “we have to be compatible with OTel”, that does NOT necessarily mean that we have to use OTel libraries to instrument our services. In fact, I might not even recommend that: depending on what signal we are talking about, the existing libraries might be more mature than the OTel/Swiss-Army-knife libraries. This might change over time, but that’s my current assessment. Anyway, you can still use OTel collectors to ship and transform all this data.

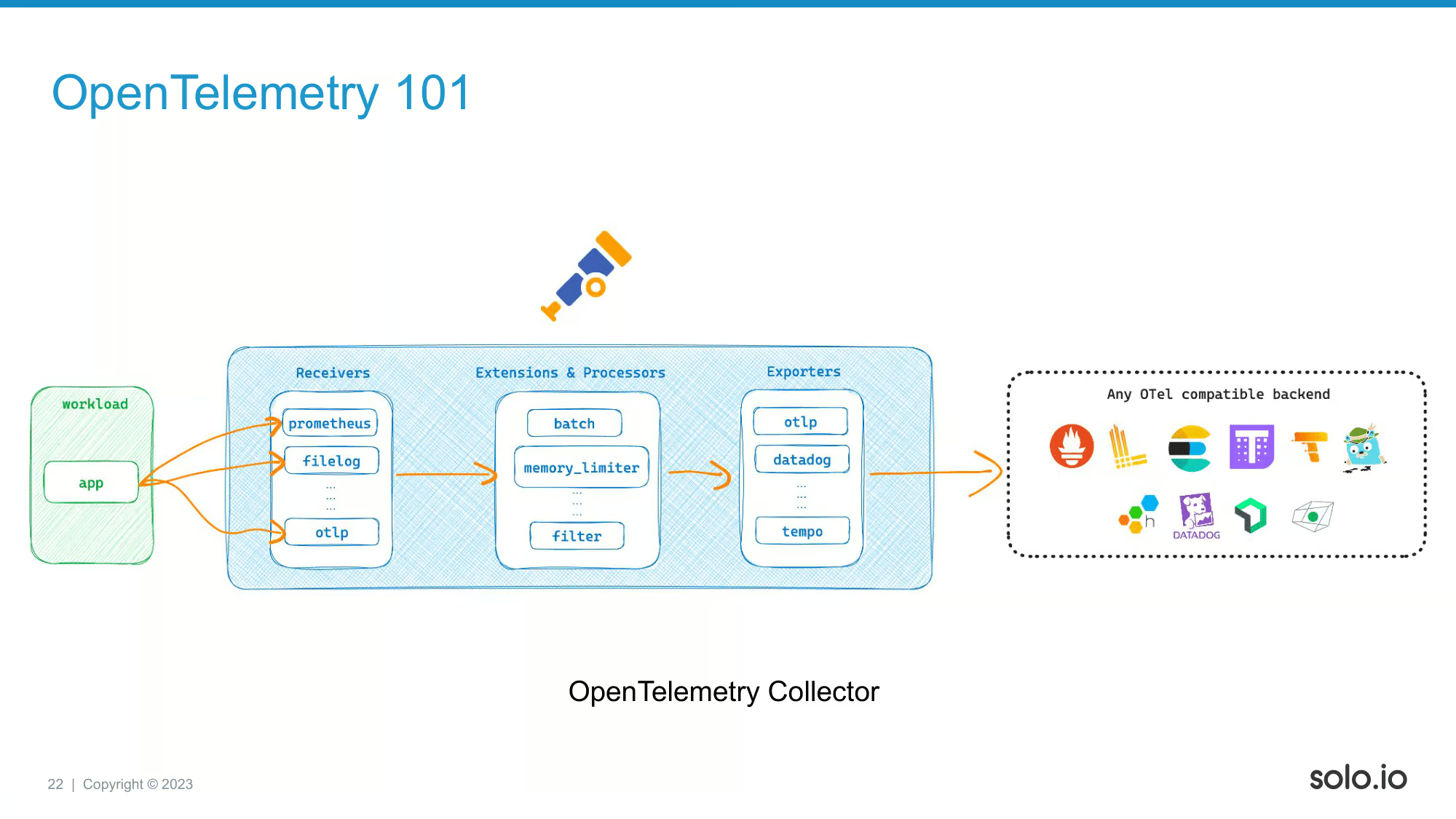

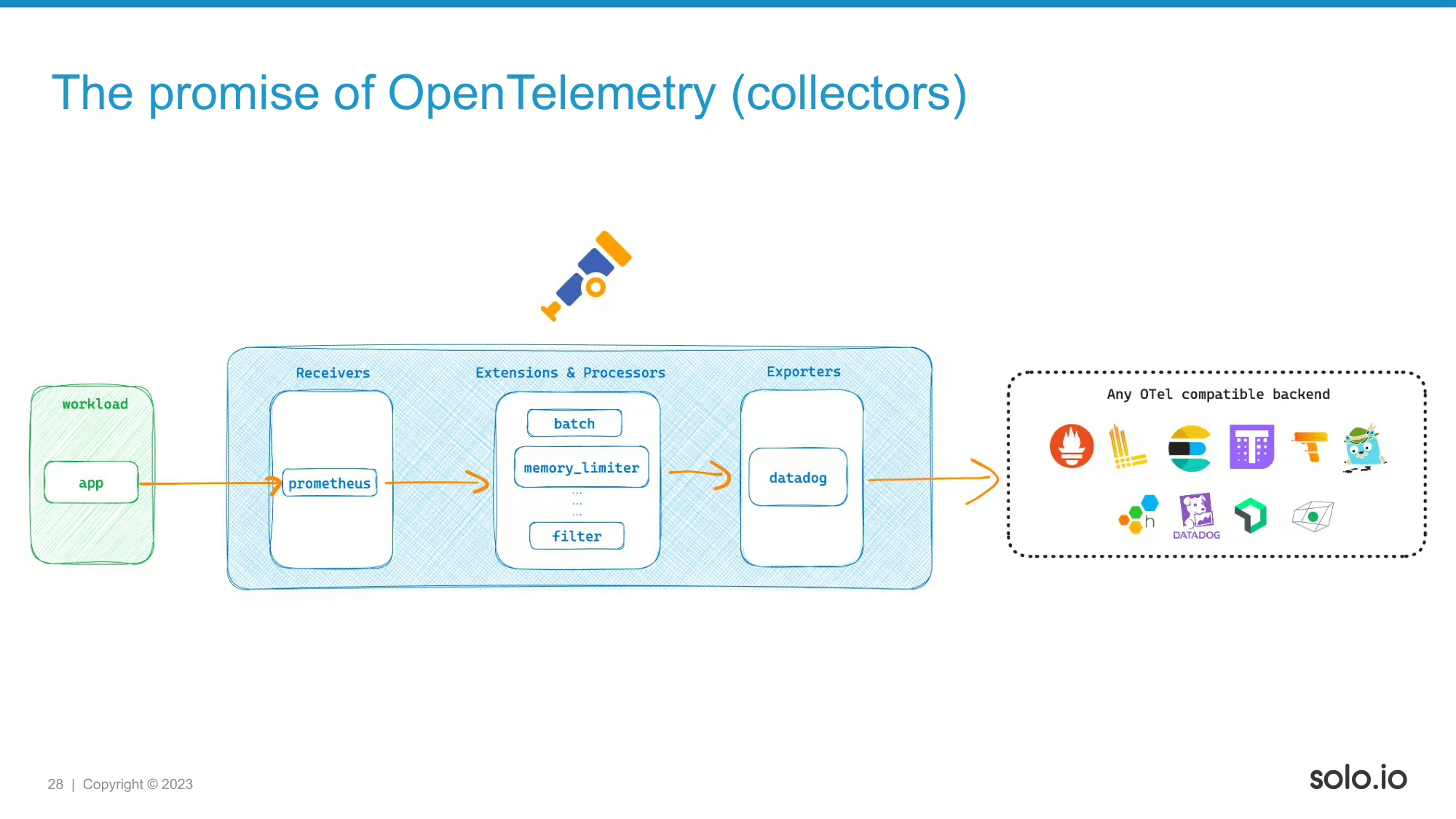

On this slide, you can see an example pipeline configuration for OpenTelemetry Collector. You have sources (receivers), then you do some processing (processors), and ship them to destinations (exporters).

Now, let’s see what the world looked like before having OTel collectors running around Kubernetes clusters.

Traditionally, people have been running some combination of these open-source components to collect metrics, logs, and traces. These are well-adopted technologies, and they do their job pretty well. They are different tools with different approaches; each wants to focus on its own job, and they are quite good at that.

However, on the “dark side”, if you have a SaaS o11y vendor, and are using something like Dynatrace, Splunk, etc., then the traditional way to collect telemetry is via the vendor’s agents.

These agents were (are?) usually tedious to configure and had vendor-specific assumptions about the problems you were trying to solve. Let’s just say that, in my experience, these agents all left something to be desired.

Now, let’s see how OTel can help!

This is a sample configuration of an OTel collector, collecting Prometheus metrics, doing some transformations, and sending the data to Datadog. It’s fairly similar to what you are also doing when you are using their own Agent to collect metrics and ship to the SaaS platform.
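A configuration along these lines might look roughly like the sketch below. The job name, scrape target, the `resource` processor standing in for "some transformations", and the `DD_API_KEY` environment variable are all assumptions made for illustration, not taken from the slide. The `datadog` exporter is part of the contrib distribution of the Collector.

```yaml
# Hypothetical sketch: scrape Prometheus metrics, transform them, send to Datadog.
receivers:
  prometheus:
    config:
      scrape_configs:
        - job_name: "my-app"             # assumed job name
          scrape_interval: 30s
          static_configs:
            - targets: ["my-app:8080"]   # assumed scrape target

processors:
  resource:
    attributes:
      - key: deployment.environment
        value: production                # assumed value
        action: upsert
  batch: {}

exporters:
  datadog:
    api:
      key: ${env:DD_API_KEY}             # assumed environment variable

service:
  pipelines:
    metrics:
      receivers: [prometheus]
      processors: [resource, batch]
      exporters: [datadog]
```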

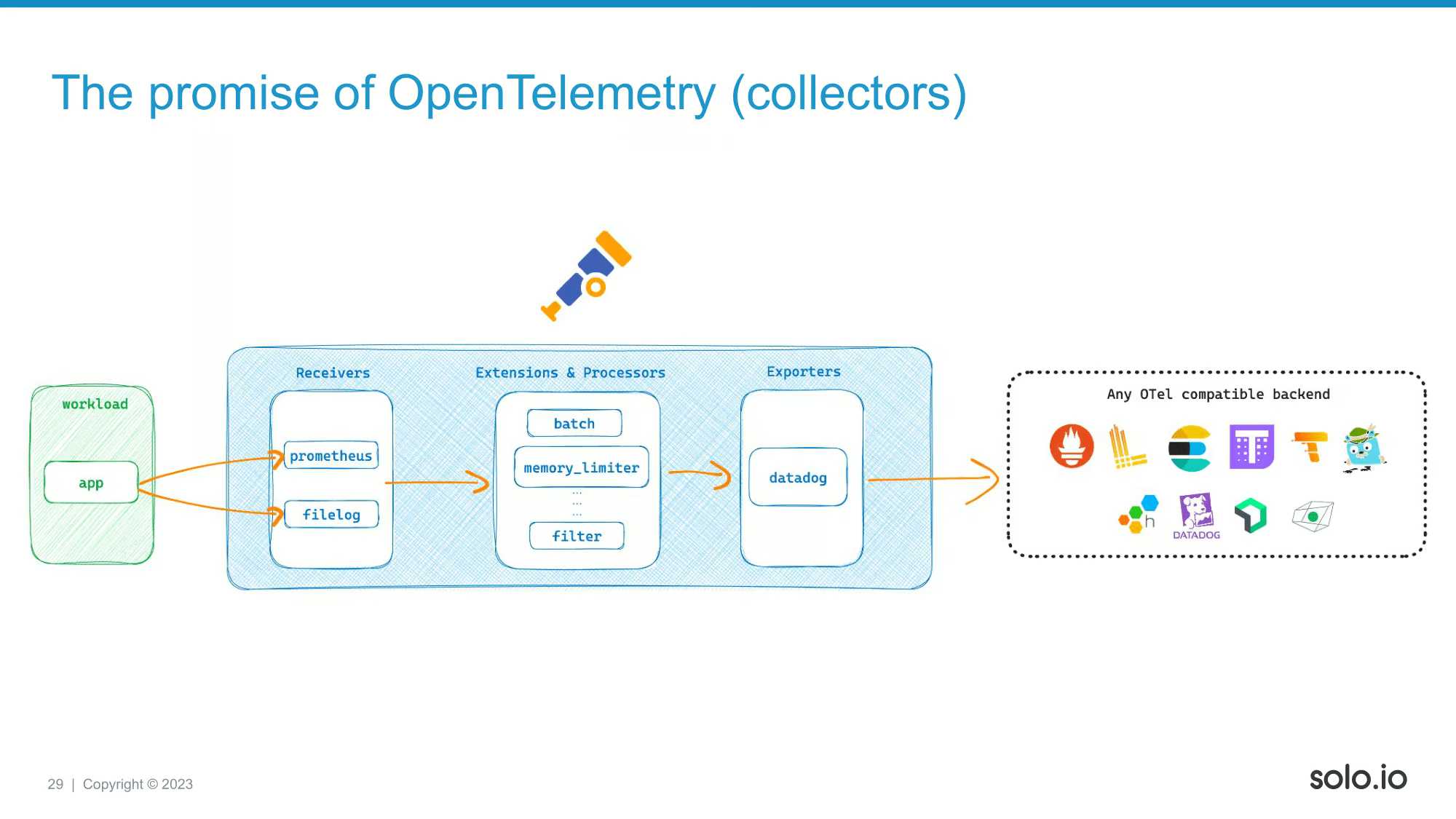

Now let’s take a look at this! We are adding a new receiver, and now we are also collecting logs, then pushing them to Datadog. We are using the very same collector, all we had to do was to change the configuration.
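As a rough sketch of that change, the additions could look like the fragment below. It assumes the existing configuration already defines a `batch` processor and a `datadog` exporter; the choice of the `filelog` receiver and the log path are assumptions for illustration, since the slide's exact receiver is not reproduced here.

```yaml
# Hypothetical additions to an existing collector config that already
# defines a `batch` processor and a `datadog` exporter.
receivers:
  filelog:
    include:
      - /var/log/pods/*/*/*.log   # assumed log location on a Kubernetes node

service:
  pipelines:
    logs:                          # new pipeline next to the existing metrics one
      receivers: [filelog]
      processors: [batch]
      exporters: [datadog]
```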

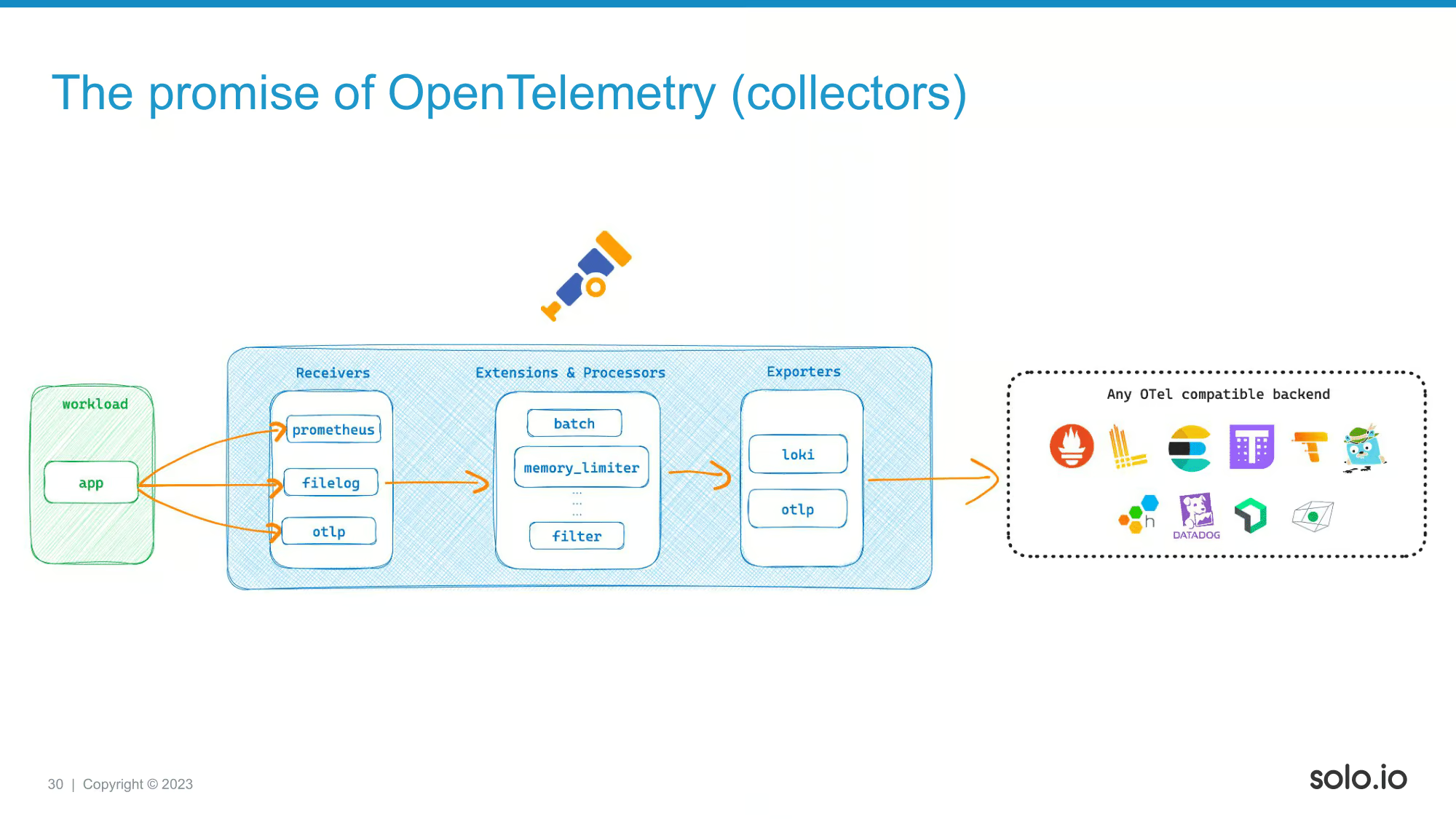

Now check this out! We added another receiver called otlp, so now we are also collecting traces. Great, but what is even more exciting is the exporters section. Notice that we are now pushing everything to different backends. Loki can be an OSS logging backend, and the otlp exporter can point to, let’s say, Tempo. So we can basically migrate to a different vendor, or even to OSS solutions, without changing anything except the exporter section. Great, isn’t it? The cost of migration is also greatly reduced if you follow this approach.

Now, let’s go through some use-cases and see when it makes sense to use these new OTel based solutions for o11y problems!
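A minimal sketch of such a swap is shown below, covering only the logs and traces pipelines. The endpoints and the `otlp/tempo` name are hypothetical, and the fragment assumes a `filelog` receiver and a `batch` processor are defined elsewhere in the same file. The `loki` exporter was available in the contrib distribution at the time of the talk.

```yaml
# Hypothetical fragment: same collector, different backends.
receivers:
  otlp:                     # accepts traces pushed by instrumented services
    protocols:
      grpc: {}
      http: {}

exporters:
  loki:
    endpoint: http://loki:3100/loki/api/v1/push   # assumed Loki address
  otlp/tempo:
    endpoint: tempo:4317                          # assumed Tempo address
    tls:
      insecure: true                              # assumption: plaintext inside the cluster

service:
  pipelines:
    logs:
      receivers: [filelog]       # assumed to be defined elsewhere
      processors: [batch]        # assumed to be defined elsewhere
      exporters: [loki]
    traces:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlp/tempo]
```

Only the `exporters` entries and the pipeline references to them differ between backends; the receivers and processors stay as they were.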

If you have an ivory tower architect making this proposal, then hopefully most of the SRE people on the team would look something like this. Some of them might not even understand how this is possible; the rest would just be confused about why you are considering changing what is working perfectly fine.

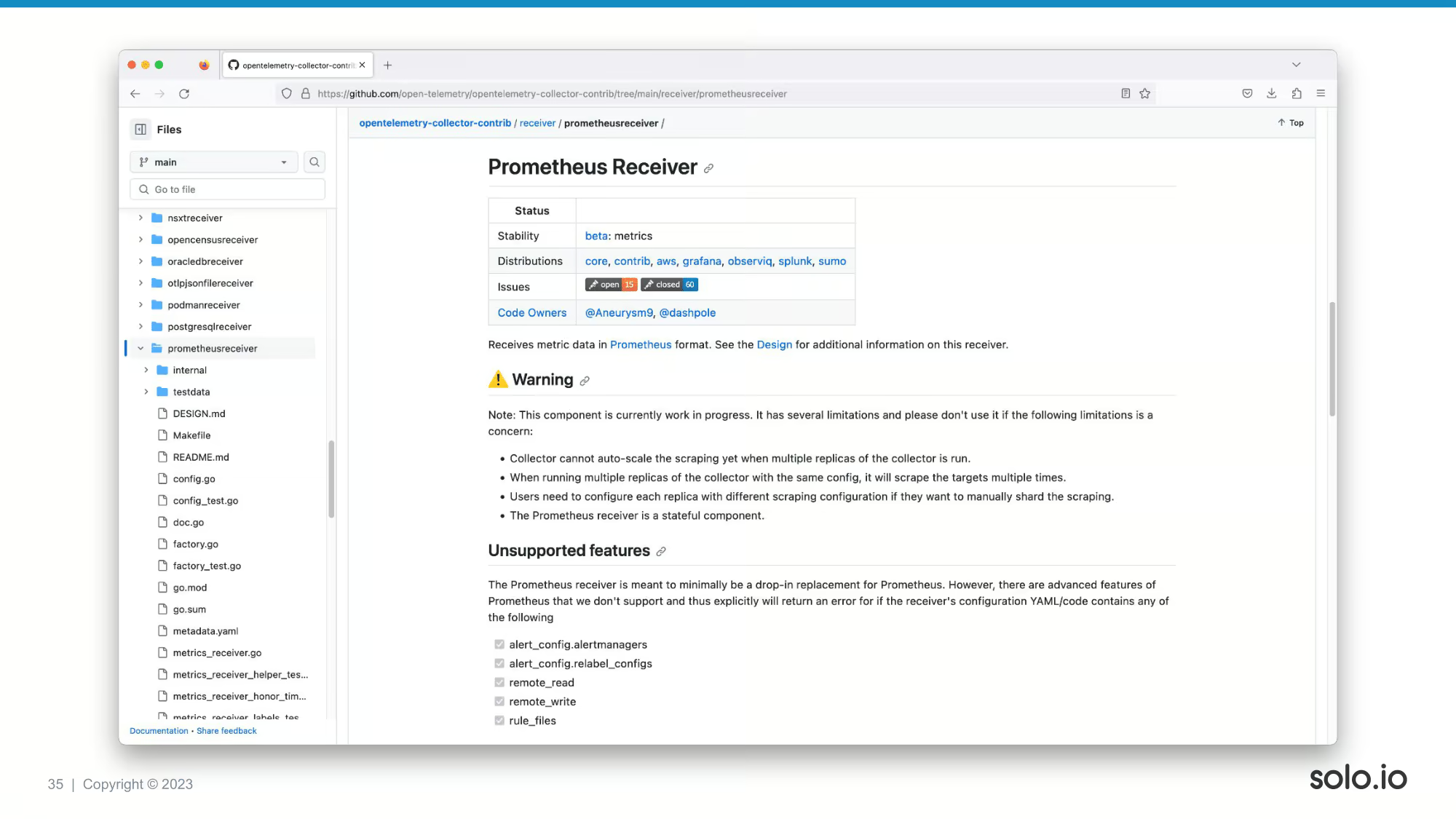

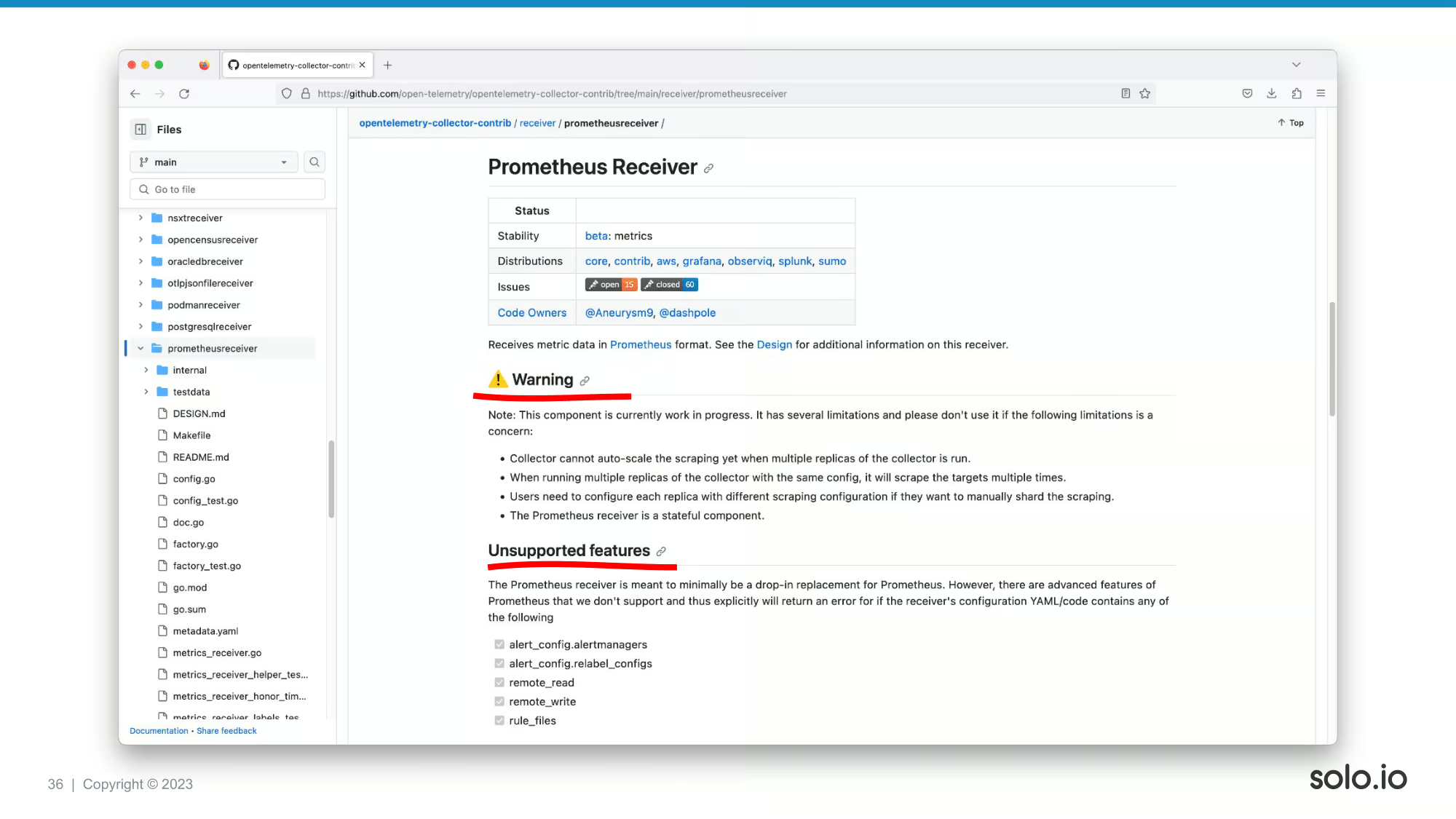

Technically, you can use the prometheusreceiver in OTel as an almost 100% replacement for Prometheus. However, it’s NOT a 100% equivalent, and there are some limitations you should be aware of.

You have scaling limitations, you lose the PromQL-related capabilities, you don’t have alerting, and so on.
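To make the PromQL and alerting gap concrete, consider a Prometheus alerting rule like the sketch below. A Prometheus server evaluates such rules itself, whereas the collector's Prometheus receiver only scrapes and forwards samples, so the evaluation would have to move to whatever backend receives the data. The metric name, threshold, and labels are hypothetical.

```yaml
# Hypothetical Prometheus rule file; evaluated by a Prometheus server,
# not by an OTel collector.
groups:
  - name: example-alerts
    rules:
      - alert: HighErrorRate
        # PromQL: share of 5xx responses over the last 5 minutes
        expr: |
          sum(rate(http_requests_total{code=~"5.."}[5m]))
            / sum(rate(http_requests_total[5m])) > 0.05
        for: 10m
        labels:
          severity: page
        annotations:
          summary: "More than 5% of requests are failing"
```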

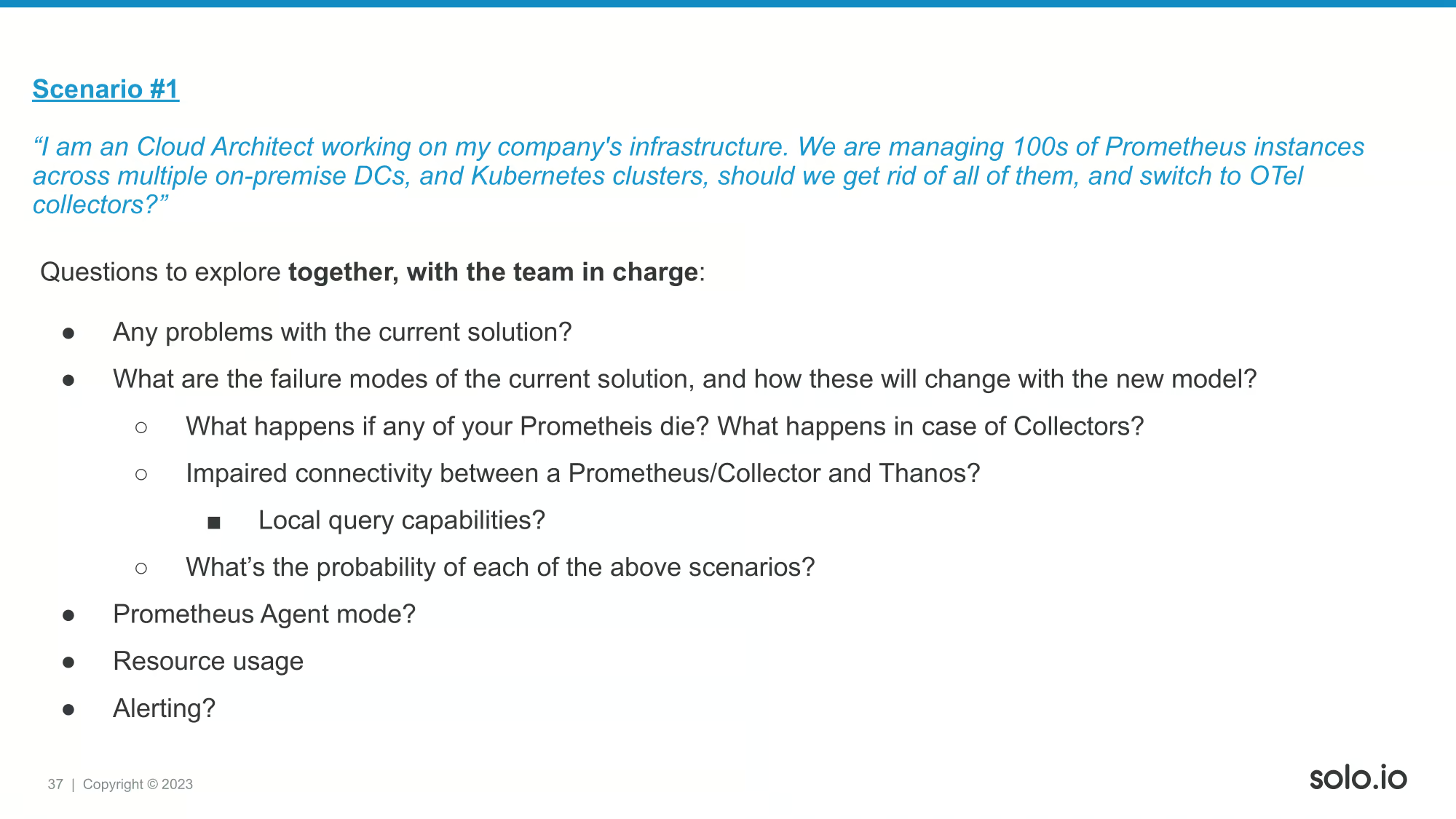

I recommend discussing these questions with the team in charge of the platform.

My assessment for this use-case is that most of the time it doesn’t really make sense to go full-on OTel collectors. Even if you want to do that, you should also be aware of the functionalities you might be lacking afterwards and the new failure modes you are introducing into your system. Let’s say it’s a maybe, but it’s mostly a no.

Let’s see use-case #2!

In this case, we have some questions again to explore before making any decisions.

Generally, I’d recommend doing this. Even the o11y vendors are advocating switching to OTel collectors, as that will benefit them as well if you want to migrate. You will also benefit from doing so, so I’d say you cannot really make a bad decision by switching to OTel, but you have to make sure your migration is planned properly!

Third and last use-case.

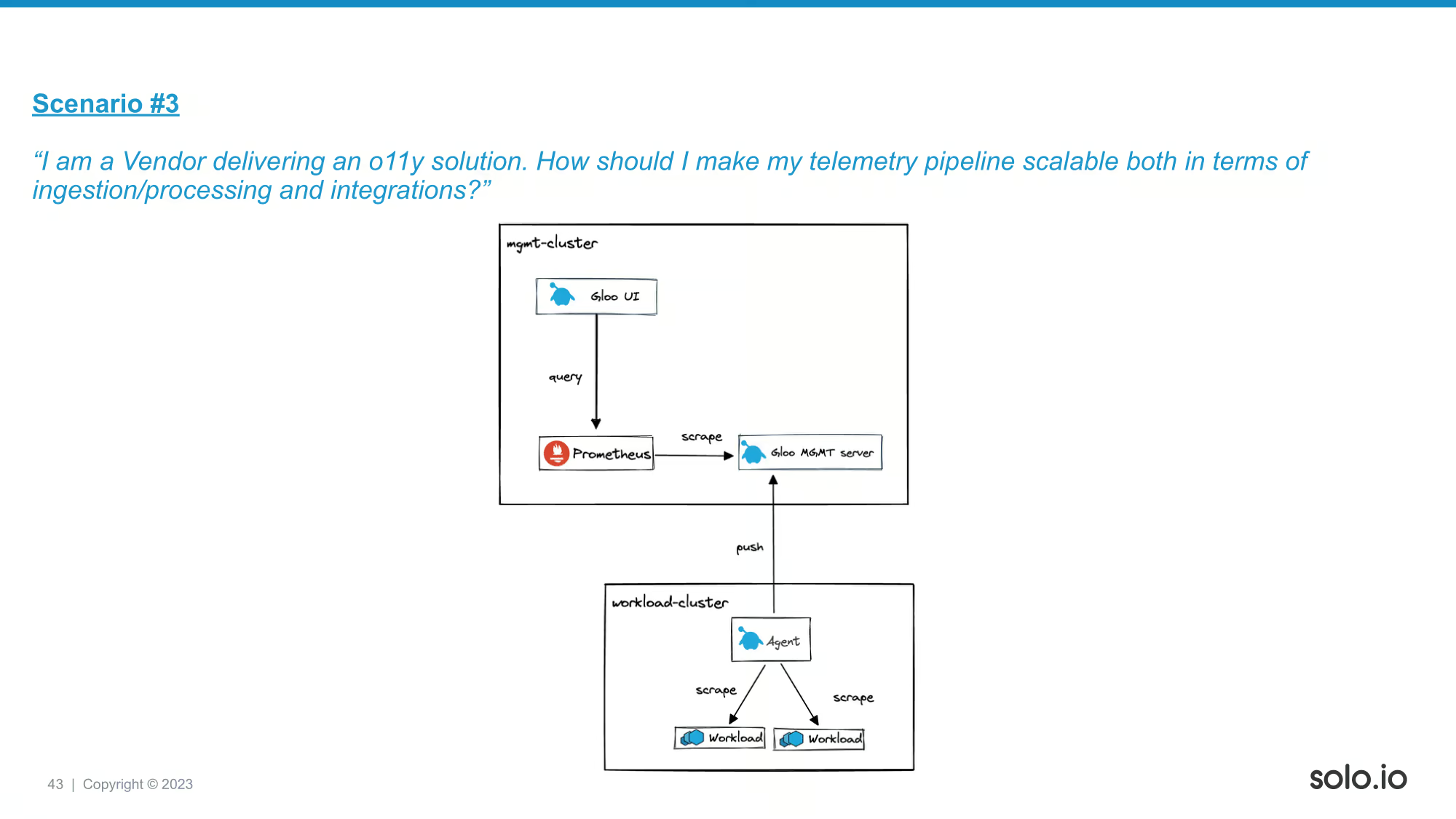

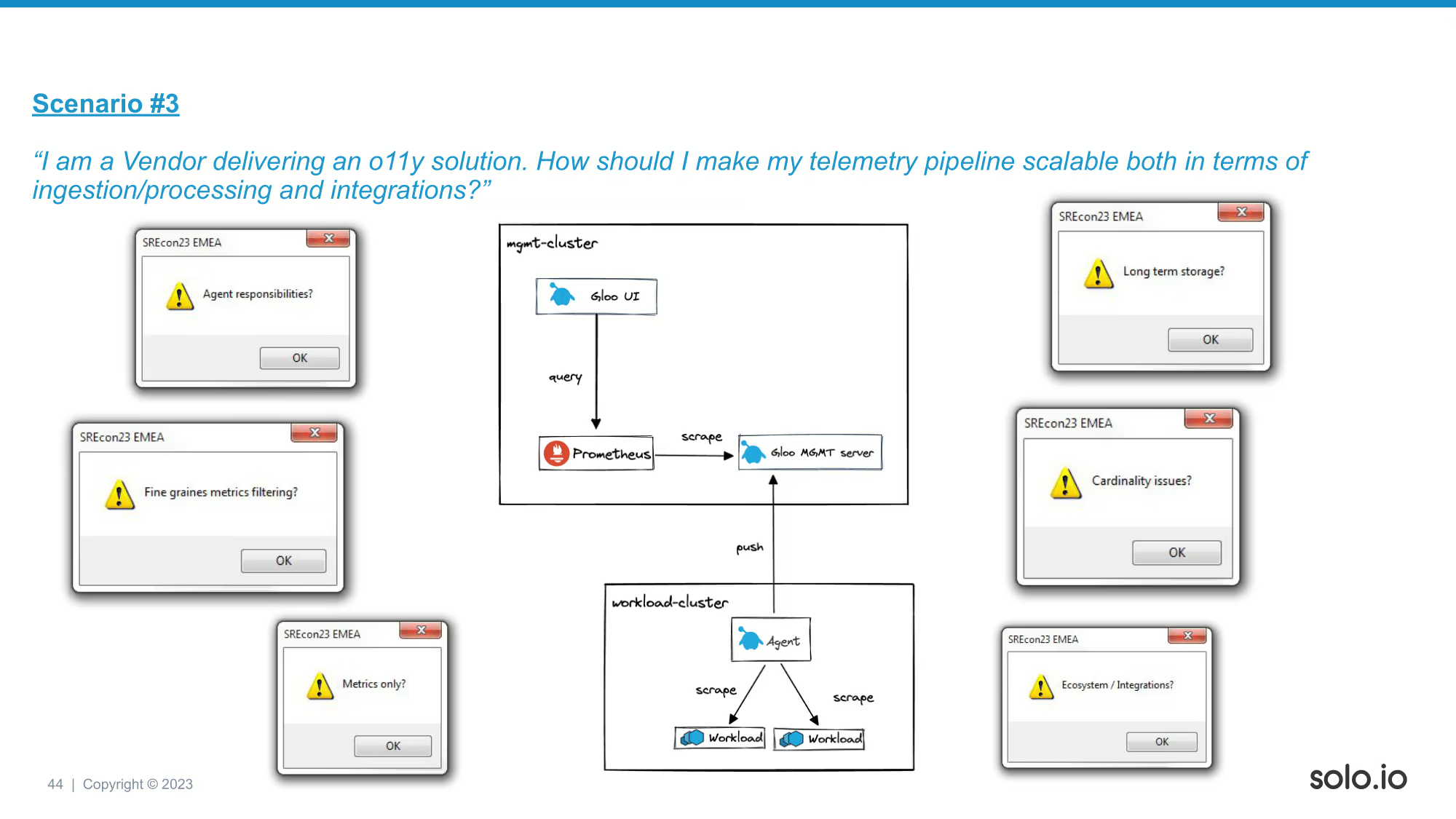

At Solo.io we have an orchestrator layer built on top of service meshes and API gws. We have access to a huge amount of telemetry data. You can see our initial approach to collect all these. We usually have a mgmt cluster, and the workload cluster is what’s running the user’s business applications. We originally had our Agent to collect telemetry + do its other responsibilities as well. Then we had some problems.

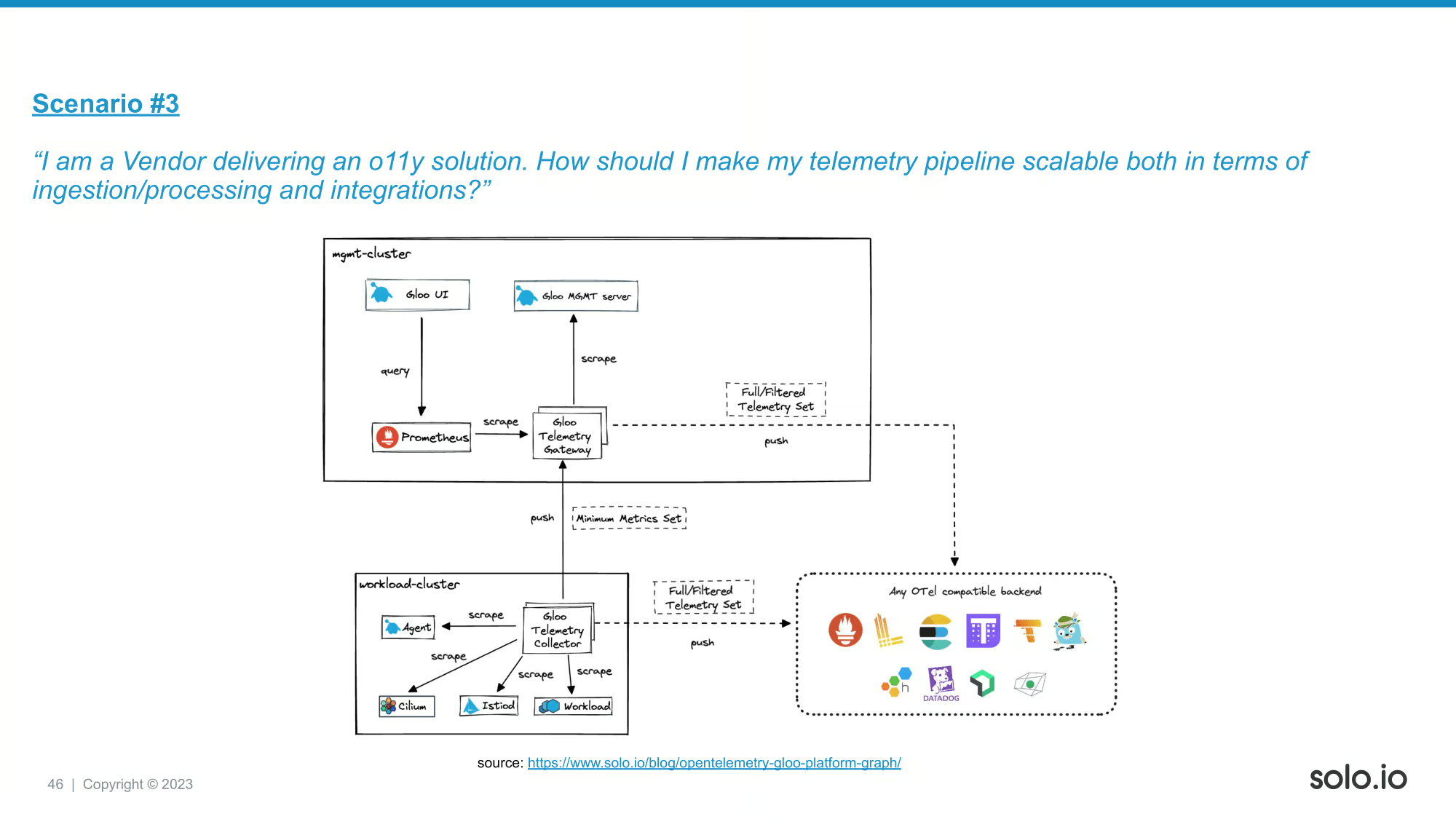

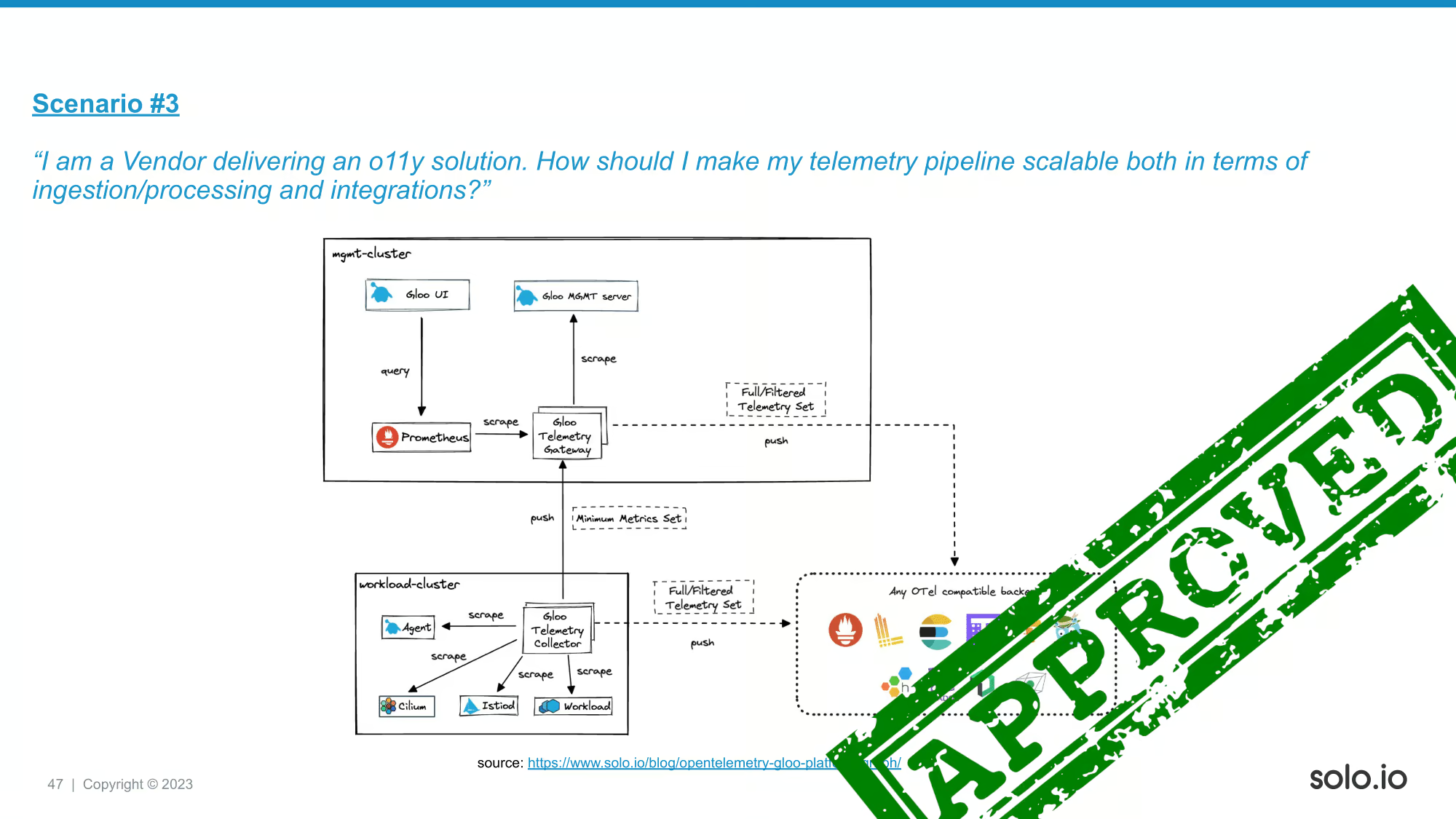

Then, we switched to this OTel collector based architecture. Here we are running our own build of OTel collectors that we can easily customize to collect all kinds of telemetry signals. We can also integrate with all the OTel-compatible backends out there. As a vendor, it definitely made sense for us to leverage OTel and its ecosystem to solve all these challenges instead of building yet another homegrown solution to meet all these needs. You can find a link to a blog post on this slide if you would like to explore in more detail how OTel drives our service graph.

Overall, I’d say I’d definitely recommend repackaging OTel collectors for this purpose, as the alternative is to reinvent the wheel and create a solution without all the benefits of using the existing developer ecosystem around OTel tooling.

These are all cliches, but still important engineering principles to go by every day!

If you are interested in a talk about an OTel migration user journey, I recommend checking the talk at 10:10 as well. Take care, thanks for coming!